Convex Optimization

Convex optimization deals with finding the minimum of a function while ensuring that the search space remains well-behaved. The key requirement is that the objective function is convex and that the feasible region is a convex set.

A convex optimization problem is formally written as:

$$\min_{x \in X} f(x)$$

where $f$ is a convex function (curves upwards like a bowl) and $X$ is a convex set, meaning that if two points belong to the set, the entire line segment connecting them is also inside the set.
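The "bowl" picture corresponds to the standard convexity inequality $f(\lambda x + (1-\lambda) y) \le \lambda f(x) + (1-\lambda) f(y)$ for $\lambda \in [0, 1]$. The sketch below samples that inequality on an interval. The helper name `is_convex_on_samples` and the two test functions are illustrative choices, and a passing check is only evidence of convexity, not a proof.

```python
import math
import random


def is_convex_on_samples(f, lo, hi, trials=10_000, tol=1e-9, seed=0):
    """Sample the convexity inequality on [lo, hi]; False means a violation was found."""
    rng = random.Random(seed)
    for _ in range(trials):
        x, y = rng.uniform(lo, hi), rng.uniform(lo, hi)
        lam = rng.random()
        point_on_segment = lam * x + (1.0 - lam) * y
        chord_value = lam * f(x) + (1.0 - lam) * f(y)
        if f(point_on_segment) > chord_value + tol:
            return False  # the graph rises above the chord: not convex
    return True  # no violation found (evidence, not a proof)


square_looks_convex = is_convex_on_samples(lambda x: x * x, -5.0, 5.0)
sine_looks_convex = is_convex_on_samples(math.sin, -5.0, 5.0)
print(square_looks_convex, sine_looks_convex)
```

The quadratic passes every sampled check, while the sine is rejected as soon as a sampled segment falls in a region where its graph bulges above the chord.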

Local Minimum in Convex Optimization

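A local minimum of a function $f$ is a point $x$ for which there exists some $\varepsilon > 0$ such that $f(x) \le f(y)$ for all $y$ in the domain of $f$ with $\|y - x\| < \varepsilon$.

This means that in a small neighborhood around $x$, no other point has a smaller function value.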

Local Minima are Global Minima

One of the most powerful properties of convex functions is that any local minimum is automatically a global minimum.

Lemma 1

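If $x^\star$ is a local minimum of a convex function $f$, then $x^\star$ is a global minimum, that is, $f(x^\star) \le f(y)$ for all $y$ in the domain of $f$.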

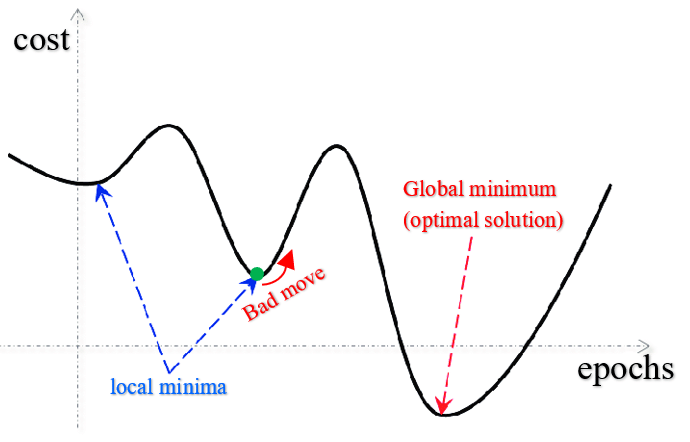

This property makes convex optimization particularly efficient because it eliminates the risk of getting trapped in local minima, unlike in non-convex problems.
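One way to see why a local minimum of a convex function must be global is a short argument by contradiction. The sketch below uses the standard convexity inequality and assumes a convex domain; the symbols $x^\star$, $y$, $y_\lambda$ and $\lambda$ are notation chosen here for illustration.

```latex
% Claim: a local minimum x^\star of a convex function f is a global minimum.
Suppose, for contradiction, that some $y$ satisfies $f(y) < f(x^\star)$.
For $\lambda \in (0, 1)$ define $y_\lambda = \lambda x^\star + (1 - \lambda) y$.
By convexity,
\[
  f(y_\lambda) \le \lambda f(x^\star) + (1 - \lambda) f(y)
               < \lambda f(x^\star) + (1 - \lambda) f(x^\star)
               = f(x^\star).
\]
As $\lambda \to 1$ we have $y_\lambda \to x^\star$, so every neighborhood of
$x^\star$ contains a point with a strictly smaller value than $f(x^\star)$.
This contradicts local minimality, hence no such $y$ exists.
```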

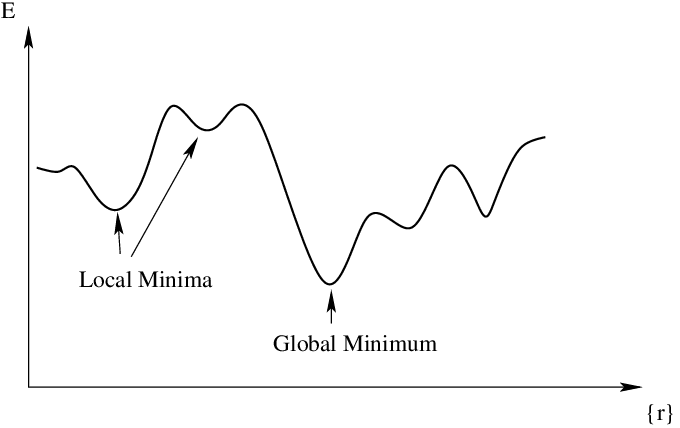

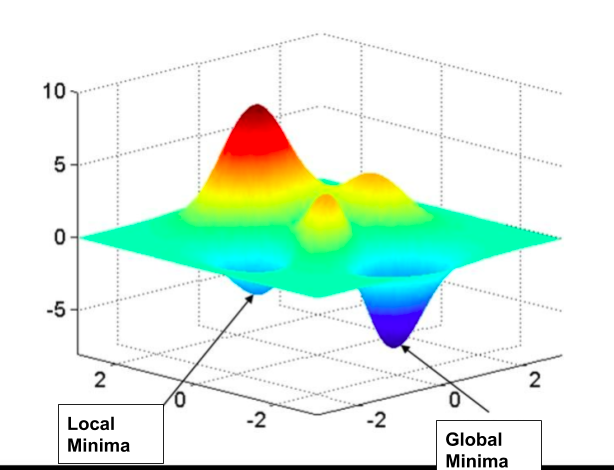

In non-convex functions, local minima can be traps that prevent finding the global minimum.
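The contrast between the two cases can be sketched with plain gradient descent started from several points. The two test functions, the step size, and the starting points are arbitrary choices made for illustration.

```python
def gradient_descent(grad, x0, step=0.01, iters=5000):
    """Plain fixed-step gradient descent in one dimension."""
    x = x0
    for _ in range(iters):
        x -= step * grad(x)
    return x


def convex_grad(x):
    # Gradient of the convex function f(x) = (x - 2)^2.
    return 2.0 * (x - 2.0)


def nonconvex_grad(x):
    # Gradient of the non-convex function g(x) = x^4 - 3x^2 + x.
    return 4.0 * x**3 - 6.0 * x + 1.0


starts = [-2.0, -0.5, 0.5, 2.0]
convex_results = [gradient_descent(convex_grad, s) for s in starts]
nonconvex_results = [gradient_descent(nonconvex_grad, s) for s in starts]

print([round(x, 4) for x in convex_results])
print([round(x, 4) for x in nonconvex_results])
```

On the convex function every start ends at the same minimizer, $x = 2$. On the non-convex one, the starts to the left of the local maximum (near $x \approx 0.17$) reach the global minimum near $x \approx -1.30$, while the starts to its right settle in the worse local minimum near $x \approx 1.13$.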

Critical Points are Global Minima

A critical point of a function is where the gradient (first derivative) is zero. In the case of convex functions, a critical point is always a global minimum.

Lemma 2

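If $f$ is convex and differentiable over an open domain, and $\nabla f(x) = 0$ at some point $x$, then $x$ is a global minimum.

This means that solving the equation $\nabla f(x) = 0$ is enough to find a global minimum.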

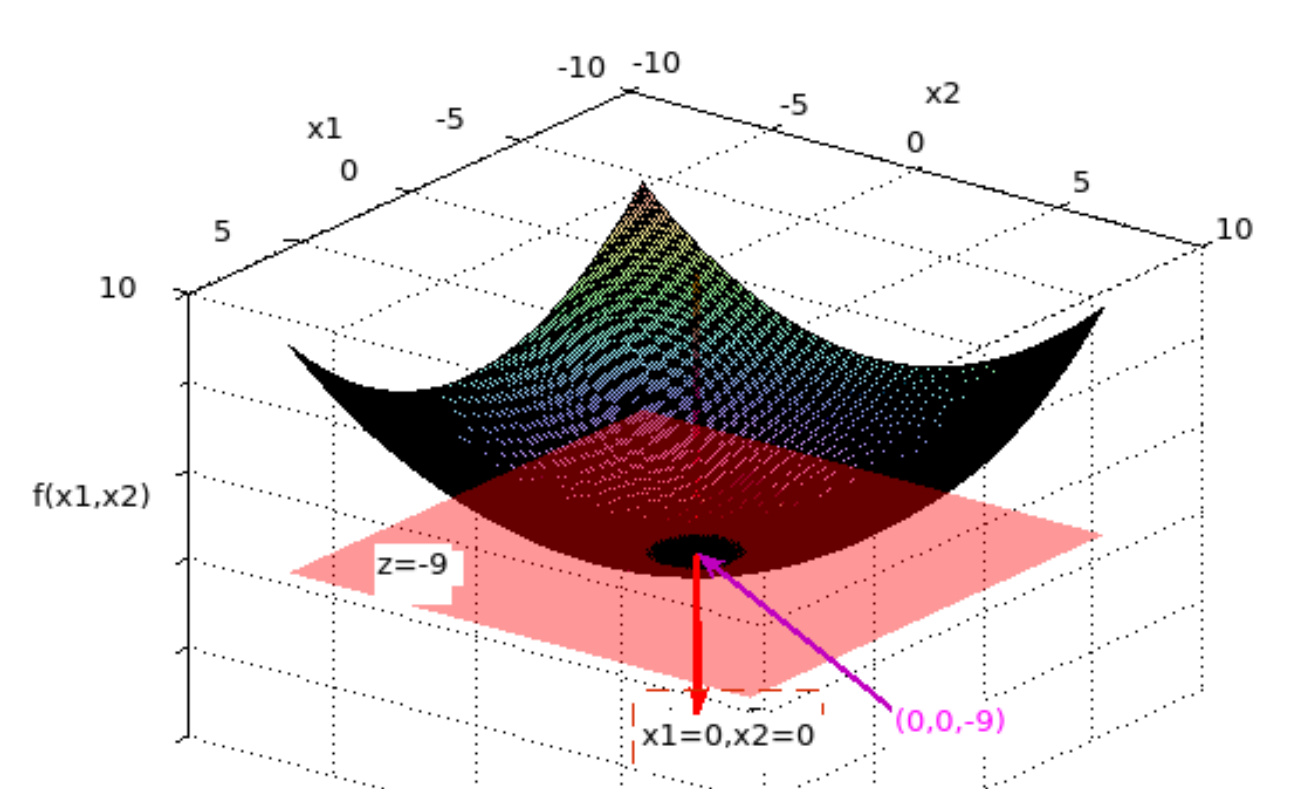

Geometric intuition:

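The tangent hyperplane (a generalization of a tangent line in higher dimensions) is horizontal at $x$. Because the graph of a convex function lies on or above each of its tangent hyperplanes, no point can have a value below $f(x)$.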

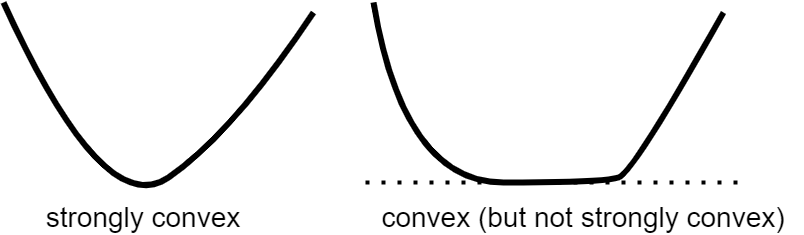
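For a convex quadratic, setting the gradient to zero reduces to a linear system, which makes the critical-point property easy to check numerically. The function `f` below, the helper `solve_2x2`, and the sampling range are illustrative assumptions, not part of the original text.

```python
import random


def f(x, y):
    # Convex quadratic: its Hessian [[2, 1], [1, 2]] is positive definite.
    return x * x + x * y + y * y - 4.0 * x - 5.0 * y


def grad_f(x, y):
    return (2.0 * x + y - 4.0, x + 2.0 * y - 5.0)


def solve_2x2(a11, a12, a21, a22, b1, b2):
    """Solve a 2x2 linear system with Cramer's rule."""
    det = a11 * a22 - a12 * a21
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - a21 * b1) / det)


# grad_f = 0 is the linear system 2x + y = 4, x + 2y = 5.
x_star, y_star = solve_2x2(2.0, 1.0, 1.0, 2.0, 4.0, 5.0)

# Compare the critical value against randomly sampled points.
rng = random.Random(0)
samples = [(rng.uniform(-10, 10), rng.uniform(-10, 10)) for _ in range(10_000)]
no_sample_is_lower = all(f(x, y) >= f(x_star, y_star) - 1e-9 for x, y in samples)

print((x_star, y_star), grad_f(x_star, y_star), no_sample_is_lower)
```

The gradient vanishes at $(1, 2)$, and none of the sampled points attains a smaller value than $f(1, 2) = -7$, which matches what the lemma predicts for a convex differentiable function.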

Strictly Convex Functions

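A strictly convex function is a convex function where the convexity inequality is strict: $f(\lambda x + (1 - \lambda) y) < \lambda f(x) + (1 - \lambda) f(y)$ for all $x \neq y$ and $\lambda \in (0, 1)$. Its graph has no flat segments, which ensures that it has at most one minimum.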

Lemma 3

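If $f$ is strictly convex, then $f$ has at most one global minimum.

This property is useful because it guarantees uniqueness: whenever a minimum exists, every optimization algorithm that converges to a minimizer arrives at the same solution.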
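The role of strictness can be illustrated by comparing a convex function with a flat bottom against a strictly convex one. Both functions, the step size, and the starting points below are assumptions made for this sketch.

```python
def descend(grad, x0, step=0.1, iters=500):
    """Plain fixed-step gradient descent in one dimension."""
    x = x0
    for _ in range(iters):
        x -= step * grad(x)
    return x


def flat_grad(x):
    # Gradient of f(x) = max(|x| - 1, 0)^2: convex, but flat on [-1, 1],
    # so every point of that interval is a global minimum.
    excess = abs(x) - 1.0
    if excess <= 0.0:
        return 0.0
    return 2.0 * excess * (1.0 if x > 0 else -1.0)


def strict_grad(x):
    # Gradient of the strictly convex function g(x) = x^2.
    return 2.0 * x


starts = [-3.0, 0.3, 3.0]
flat_results = [descend(flat_grad, s) for s in starts]
strict_results = [descend(strict_grad, s) for s in starts]

print([round(x, 4) for x in flat_results])
print([round(x, 4) for x in strict_results])
```

On the flat-bottomed function the three runs stop at three different minimizers (near $-1$, at $0.3$, and near $1$), all with the same optimal value. On the strictly convex function every run ends at the single minimizer $x = 0$.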

Summary

| Concept | Meaning |
|---|---|
| Convex optimization | Minimizing a convex function over a convex set ensures efficient and predictable results. |
| Local minimum | A point $x$ where $f(x) \le f(y)$ for all $y$ in a small neighborhood of $x$. |
| Local = Global | In convex functions, every local minimum is a global minimum. |
| Critical points | If $\nabla f(x) = 0$ for a convex differentiable function $f$, then $x$ is a global minimum. |
| Strictly convex functions | Have at most one global minimum, ensuring uniqueness. |

Convex optimization provides a powerful and reliable framework for finding optimal solutions in various fields, from machine learning to economics. 🚀