
Hessian matrix: Understanding the second-order behavior of functions

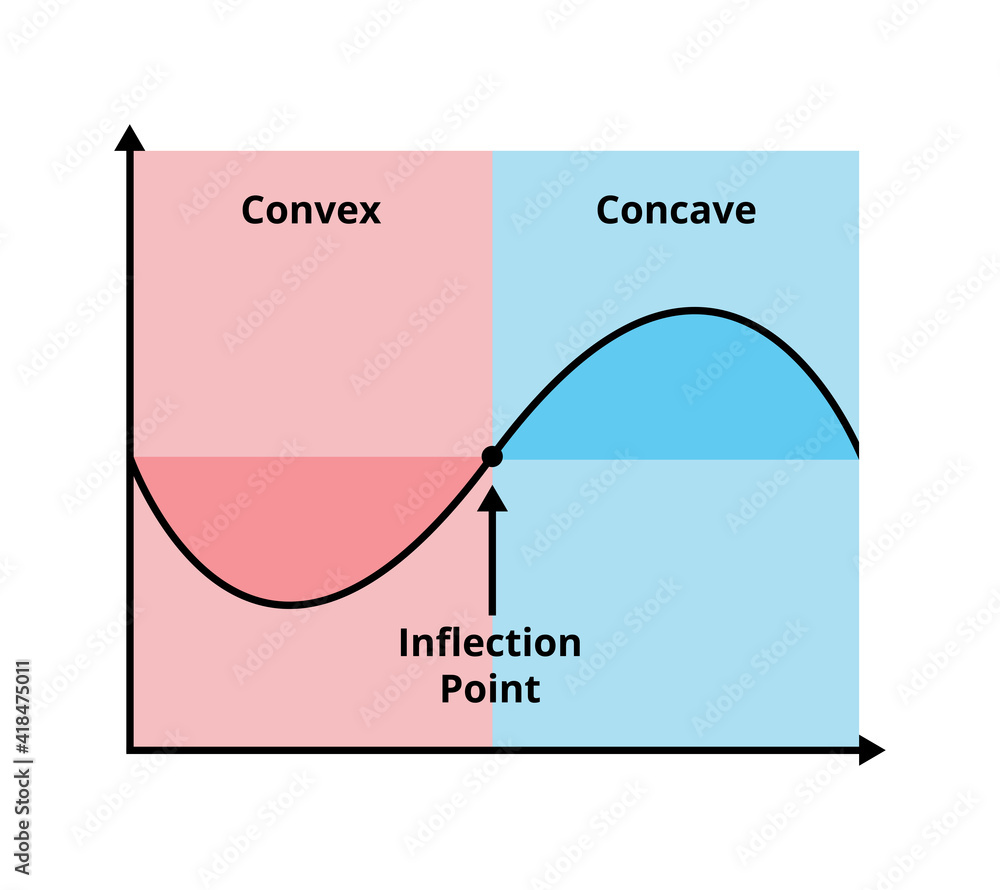

The Hessian matrix is a mathematical tool used to analyze the curvature of a function. It helps us determine whether a function is convex, concave, or neither by looking at its second-order derivatives. In optimization, the Hessian is particularly useful: if it is positive definite everywhere, the function is strictly convex and has at most one minimizer, and how well-conditioned the Hessian is indicates how quickly standard methods (like gradient descent) will converge.

What is the Hessian matrix

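The Hessian of a function $f(x_1, \dots, x_n)$ is the $n \times n$ matrix of its second-order partial derivatives:

$$
H(f) =
\begin{bmatrix}
\dfrac{\partial^2 f}{\partial x_1^2} & \dfrac{\partial^2 f}{\partial x_1 \partial x_2} & \cdots & \dfrac{\partial^2 f}{\partial x_1 \partial x_n} \\
\dfrac{\partial^2 f}{\partial x_2 \partial x_1} & \dfrac{\partial^2 f}{\partial x_2^2} & \cdots & \dfrac{\partial^2 f}{\partial x_2 \partial x_n} \\
\vdots & \vdots & \ddots & \vdots \\
\dfrac{\partial^2 f}{\partial x_n \partial x_1} & \dfrac{\partial^2 f}{\partial x_n \partial x_2} & \cdots & \dfrac{\partial^2 f}{\partial x_n^2}
\end{bmatrix}
$$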

Each entry of this matrix is the rate of change of one partial derivative with respect to another variable. The diagonal elements describe how the function curves along each coordinate direction, while the off-diagonal elements (the mixed partial derivatives) describe how the slope along one variable changes as another variable changes. When the second partial derivatives are continuous, the mixed partials are equal and the Hessian is symmetric.
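To make the entries concrete, here is a minimal sketch that approximates the Hessian numerically with central finite differences. The function name `numerical_hessian`, the step size `h`, and the test function are illustrative assumptions, not part of the original text; in practice an automatic-differentiation library would usually be preferred.

```python
from typing import Callable, List, Sequence


def numerical_hessian(
    f: Callable[[Sequence[float]], float],
    x: Sequence[float],
    h: float = 1e-3,
) -> List[List[float]]:
    """Approximate the Hessian of f at x with central finite differences.

    Entry (i, j) estimates the second partial derivative of f with
    respect to x_i and x_j. The step h is an assumed default.
    """
    n = len(x)
    hessian = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):

            def shifted(di: int, dj: int) -> float:
                # Evaluate f with coordinate i moved by di*h and j by dj*h.
                point = list(x)
                point[i] += di * h
                point[j] += dj * h
                return f(point)

            hessian[i][j] = (
                shifted(1, 1) - shifted(1, -1) - shifted(-1, 1) + shifted(-1, -1)
            ) / (4.0 * h * h)
    return hessian


if __name__ == "__main__":
    # Hypothetical test function f(x, y) = x^2 * y + 3 * y^2.
    # Its exact Hessian is [[2y, 2x], [2x, 6]].
    f = lambda p: p[0] ** 2 * p[1] + 3.0 * p[1] ** 2
    for row in numerical_hessian(f, [1.0, 2.0]):
        print(row)
```

At the point (1, 2) the printed rows should be close to `[4, 2]` and `[2, 6]`: the diagonal holds the curvature along each axis, and the off-diagonal entries hold the mixed partials.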

Why is the Hessian important

The Hessian matrix is used to:

- Determine convexity: If the Hessian is positive semidefinite at every point of a convex domain, the function is convex.

- Classify critical points: It tells us whether a point is a local minimum, maximum, or a saddle point.

- Optimize functions: In machine learning, economics, and physics, the Hessian is used to efficiently minimize or maximize functions.

Understanding the Hessian in one dimension (1D case)

In one dimension, the Hessian reduces to a single second derivative:

$$
H = f''(x)
$$

Here’s how to interpret it:

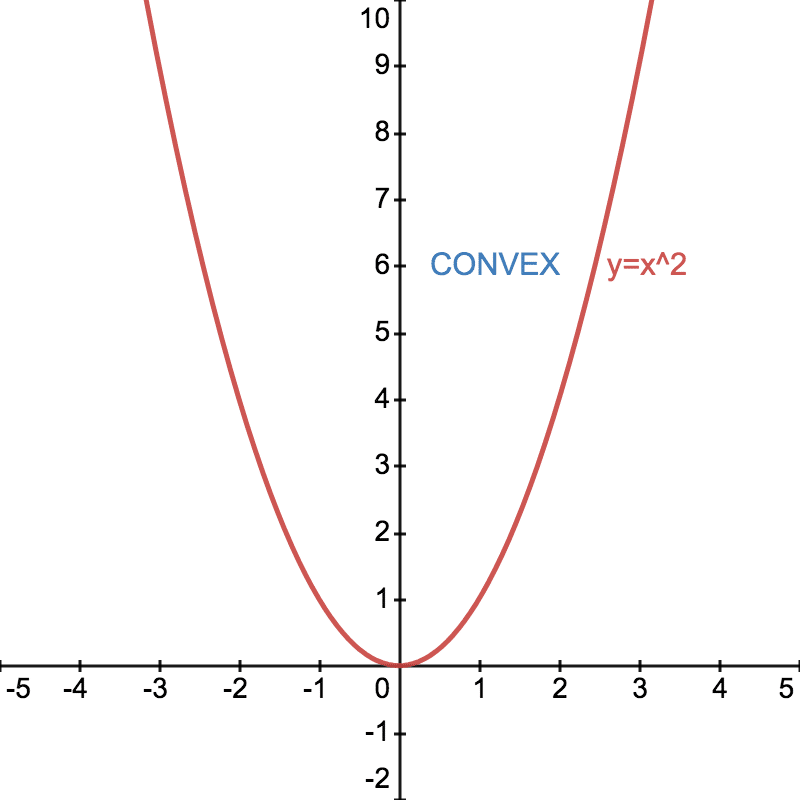

- If $f''(x) > 0$, the function is convex (curves upwards like a bowl).

- If $f''(x) < 0$, the function is concave (curves downward like an upside-down bowl).

- If $f''(x) = 0$, the function might be linear or have an inflection point (a change in curvature).
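The three cases above can be sketched as a small sign check on a numerically estimated second derivative. The helper names, the step `h`, and the tolerance `tol` are assumptions made for illustration; the result describes the curvature at the single point `x` only.

```python
import math
from typing import Callable


def second_derivative(f: Callable[[float], float], x: float, h: float = 1e-3) -> float:
    """Central-difference estimate of f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)


def local_curvature(
    f: Callable[[float], float], x: float, h: float = 1e-3, tol: float = 1e-6
) -> str:
    """Label the curvature of f at x from the sign of the estimated f''(x)."""
    d2 = second_derivative(f, x, h)
    if d2 > tol:
        return "convex"
    if d2 < -tol:
        return "concave"
    # f''(x) is numerically zero: linear behavior or a possible inflection point.
    return "flat or inflection"


if __name__ == "__main__":
    print(local_curvature(math.exp, 0.0))             # exp has f'' > 0 everywhere
    print(local_curvature(lambda x: -x * x, 1.0))     # f'' = -2
    print(local_curvature(lambda x: 3 * x + 1, 2.0))  # f'' = 0
```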

Example 1: quadratic function

Consider the function

Its first derivative is:

Its second derivative is:

Since

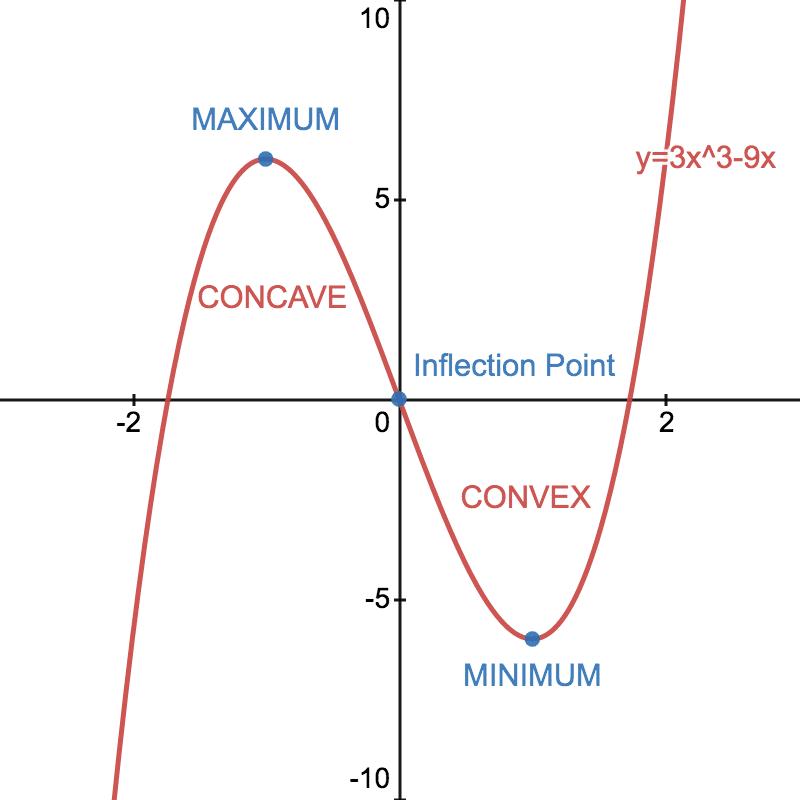

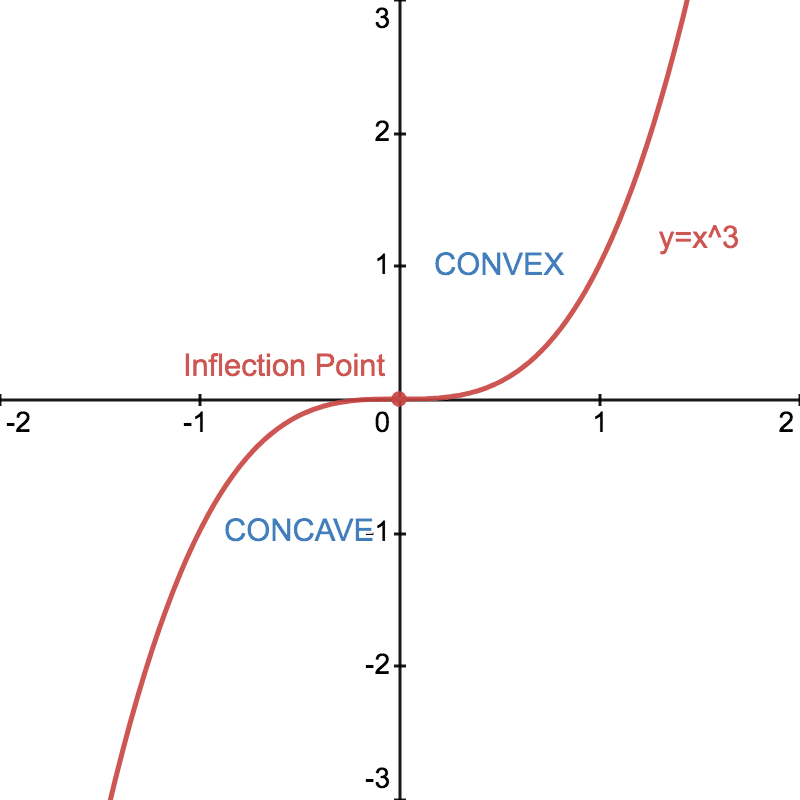
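As a purely illustrative instance (the specific quadratic $f(x) = x^2$ is an assumption chosen for concreteness), the computation for a quadratic runs as follows:

$$
f(x) = x^2, \qquad f'(x) = 2x, \qquad f''(x) = 2 > 0 \ \text{for all } x
$$

Because the second derivative is a positive constant, this particular quadratic is convex on the whole real line. More generally, $f(x) = ax^2 + bx + c$ has $f''(x) = 2a$, so it is convex exactly when $a \ge 0$.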

Example 2: cubic function

Consider the function

The first derivative is:

The second derivative is:

This function is not convex everywhere because the sign of the second derivative depends on $x$:

- For $x > 0$, we have $f''(x) > 0$, so it is convex.

- For $x < 0$, we have $f''(x) < 0$, so it is concave.

- At $x = 0$, $f''(x) = 0$, meaning the function has an inflection point where it transitions from concave to convex.

This shows that convexity cannot be established by checking a single point: the condition must hold everywhere on the domain.
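A rough way to see this numerically is to sample the second derivative over an interval instead of at one point. The sketch below assumes the cubic $f(x) = x^3$ as an illustrative function; the helper names, grid size, and tolerance are likewise assumptions. Sampling can only refute convexity or fail to refute it; it is not a proof.

```python
from typing import Callable


def second_derivative(f: Callable[[float], float], x: float, h: float = 1e-3) -> float:
    """Central-difference estimate of f''(x)."""
    return (f(x + h) - 2.0 * f(x) + f(x - h)) / (h * h)


def looks_convex_on(
    f: Callable[[float], float],
    a: float,
    b: float,
    samples: int = 201,
    tol: float = 1e-6,
) -> bool:
    """Return True if the estimated f'' is nonnegative at every grid point in [a, b]."""
    step = (b - a) / (samples - 1)
    return all(second_derivative(f, a + k * step) >= -tol for k in range(samples))


if __name__ == "__main__":
    cube = lambda x: x ** 3  # assumed example function, f''(x) = 6x
    print(looks_convex_on(cube, 0.0, 2.0))   # only the region where f'' >= 0
    print(looks_convex_on(cube, -2.0, 2.0))  # includes the concave region
```

On `[0, 2]` the check passes, while on `[-2, 2]` it fails because the sampled second derivative is negative for negative `x`.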

Understanding the Hessian in two dimensions (2D case)

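For a function of two variables $f(x, y)$, the Hessian is the $2 \times 2$ matrix of second-order partial derivatives:

$$
H = \begin{bmatrix} f_{xx} & f_{xy} \\ f_{yx} & f_{yy} \end{bmatrix}
$$

To determine convexity, we check whether this matrix is positive semidefinite at every point, using the principal minors test (or by checking that all eigenvalues are nonnegative):

- The first leading principal minor (the first diagonal element) must be nonnegative:

$$
f_{xx} \ge 0
$$

- The determinant of the Hessian matrix must be nonnegative:

$$
\det H = f_{xx} f_{yy} - f_{xy} f_{yx} \ge 0
$$

These two conditions ensure that the function is convex in two dimensions, with one caveat: when $f_{xx} = 0$, the second diagonal element must also be checked ($f_{yy} \ge 0$), because both conditions above hold for a matrix such as $\begin{bmatrix} 0 & 0 \\ 0 & -1 \end{bmatrix}$, which is not positive semidefinite.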
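The check can be sketched in a few lines for a symmetric $2 \times 2$ Hessian. The function names and the tolerance are assumptions for illustration. The sketch tests both diagonal entries as well as the determinant, since with non-strict inequalities the two leading minors alone would accept a matrix like `[[0, 0], [0, -1]]`. A closed-form eigenvalue computation is included as a cross-check.

```python
import math
from typing import Tuple


def is_psd_2x2(fxx: float, fxy: float, fyy: float, tol: float = 1e-12) -> bool:
    """Principal-minors test for the symmetric matrix [[fxx, fxy], [fxy, fyy]]."""
    diagonal_ok = fxx >= -tol and fyy >= -tol
    determinant_ok = fxx * fyy - fxy * fxy >= -tol
    return diagonal_ok and determinant_ok


def eigenvalues_2x2(fxx: float, fxy: float, fyy: float) -> Tuple[float, float]:
    """Eigenvalues (smallest first) of the symmetric matrix [[fxx, fxy], [fxy, fyy]]."""
    mean = (fxx + fyy) / 2.0
    radius = math.hypot((fxx - fyy) / 2.0, fxy)
    return mean - radius, mean + radius


if __name__ == "__main__":
    print(is_psd_2x2(2.0, 1.0, 2.0), eigenvalues_2x2(2.0, 1.0, 2.0))
    # Leading minors are 0 and 0, yet the matrix is not positive semidefinite.
    print(is_psd_2x2(0.0, 0.0, -1.0), eigenvalues_2x2(0.0, 0.0, -1.0))
```

Both tests agree: the matrix is positive semidefinite exactly when the smallest eigenvalue is nonnegative.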

Example 1: convex function in 2D

Consider:

First, compute the second derivatives:

The Hessian matrix is:

Check the conditions:

- The first leading principal minor is nonnegative ✅

- The determinant is nonnegative ✅

Since both conditions hold, the function is convex.

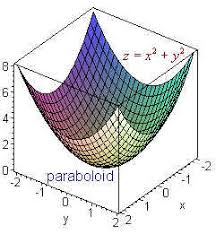

Geometric intuition:

This function represents a bowl-shaped surface in 3D, confirming convexity.

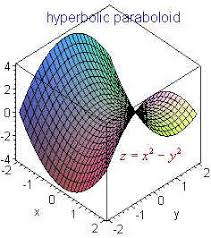

Example 2: non-convex function in 2D

Consider:

The Hessian matrix is:

Check the conditions:

- The first leading principal minor is nonnegative ✅

- The determinant is negative ❌

Since the determinant is negative, the Hessian has one positive and one negative eigenvalue, so it is not positive semidefinite. The function is therefore not convex, and a critical point with such a Hessian is a saddle point.
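The contrast between a bowl and a saddle can be sketched by classifying a critical point from the eigenvalues of its $2 \times 2$ Hessian. The two Hessians used below come from assumed illustrative functions, $x^2 + y^2$ and $x^2 - y^2$, which are not necessarily the functions of the examples above. The function name and the tolerance are also assumptions.

```python
import math


def classify_critical_point(fxx: float, fxy: float, fyy: float, tol: float = 1e-12) -> str:
    """Classify a critical point from the symmetric Hessian [[fxx, fxy], [fxy, fyy]]."""
    mean = (fxx + fyy) / 2.0
    radius = math.hypot((fxx - fyy) / 2.0, fxy)
    smallest, largest = mean - radius, mean + radius
    if smallest > tol:
        return "local minimum"   # positive definite
    if largest < -tol:
        return "local maximum"   # negative definite
    if smallest < -tol and largest > tol:
        return "saddle point"    # indefinite
    return "inconclusive"        # a zero eigenvalue: second-order test cannot decide


if __name__ == "__main__":
    # Assumed bowl f(x, y) = x^2 + y^2 has Hessian [[2, 0], [0, 2]].
    print(classify_critical_point(2.0, 0.0, 2.0))
    # Assumed saddle f(x, y) = x^2 - y^2 has Hessian [[2, 0], [0, -2]].
    print(classify_critical_point(2.0, 0.0, -2.0))
```

The second case has determinant $-4$, matching the pattern in the example: a nonnegative first minor together with a negative determinant.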

Summary of the Hessian matrix and convexity

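| Dimension | Hessian form | Convexity condition |
|---|---|---|
| 1D | $f''(x)$ | $f''(x) \ge 0$ everywhere |
| 2D | $\begin{bmatrix} f_{xx} & f_{xy} \\ f_{yx} & f_{yy} \end{bmatrix}$ | Determinant test: $f_{xx} \ge 0$, $f_{yy} \ge 0$, and $f_{xx} f_{yy} - f_{xy} f_{yx} \ge 0$ everywhere |
| nD | $n \times n$ matrix of second-order partial derivatives | Matrix is positive semidefinite everywhere |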

The nD case follows the same idea as the 2D case: we need to prove that the Hessian matrix is positive semidefinite at every point of the domain. Any valid method will do, for example checking that all principal minors are nonnegative or that all eigenvalues are nonnegative.
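For small matrices, the principal-minors version of this test can be sketched directly. The function names and the tolerance are illustrative assumptions. The sketch checks every principal minor (every square submatrix built from the same row and column indices), not only the leading ones. The number of minors grows as $2^n$, so for larger matrices an eigenvalue routine from a numerical library is the practical choice.

```python
from itertools import combinations
from typing import List, Sequence


def determinant(matrix: Sequence[Sequence[float]]) -> float:
    """Determinant by Gaussian elimination with partial pivoting."""
    a = [list(row) for row in matrix]
    n = len(a)
    det = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-15:
            return 0.0  # singular up to rounding
        if pivot != col:
            a[col], a[pivot] = a[pivot], a[col]
            det = -det  # a row swap flips the sign
        det *= a[col][col]
        for r in range(col + 1, n):
            factor = a[r][col] / a[col][col]
            for c in range(col, n):
                a[r][c] -= factor * a[col][c]
    return det


def is_psd(hessian: Sequence[Sequence[float]], tol: float = 1e-9) -> bool:
    """Check that every principal minor of a symmetric matrix is nonnegative."""
    n = len(hessian)
    for size in range(1, n + 1):
        for idx in combinations(range(n), size):
            minor: List[List[float]] = [[hessian[i][j] for j in idx] for i in idx]
            if determinant(minor) < -tol:
                return False
    return True


if __name__ == "__main__":
    print(is_psd([[2, -1, 0], [-1, 2, -1], [0, -1, 2]]))
    # Leading minors are 1, 0, 0, but the last diagonal entry is negative.
    print(is_psd([[1, 0, 0], [0, 0, 0], [0, 0, -1]]))
```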

Final takeaways

- The Hessian matrix captures the second-order behavior of a function.

- In one dimension, convexity is determined by checking if $f''(x) \ge 0$ everywhere.

- In higher dimensions, the function is convex if the Hessian matrix is positive semidefinite.

- The determinant test helps determine convexity in 2D.

- The Hessian is widely used in machine learning, physics, and optimization, for example in Newton-type methods, which use curvature information to choose better steps than the gradient alone.